Deep Dive into MVI (On the Edge)

Michael Rawlinson

May 4, 2026

Since posting my first blog about Maximo Visual Inspection (MVI), I’ve been on a bit of a journey, digging deeper into its features, exploring new use cases, and discovering just how versatile this technology can be.

In this post, I want to share a more in-depth look at what MVI has to offer, the technical details and the different ways you can use it to solve real-world problems. Whether you’re curious about how MVI works under the hood, interested in deploying it on the edge, or just looking for practical examples, I’ll cover the insights and lessons I’ve picked up so far.

MVI combines image and video analytics with deep learning models to perform classification, object detection, and anomaly detection. What sets MVI apart is its accessibility: users can train models with minimal technical expertise, making advanced AI available to a broader range of organizations.

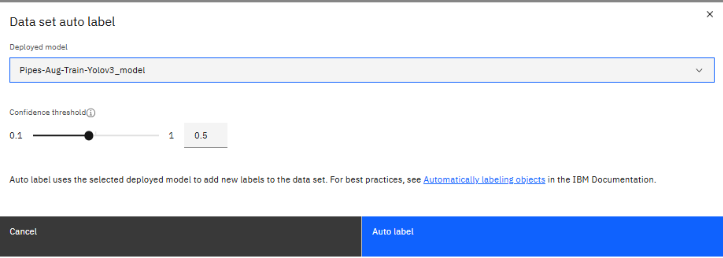

To train a model using MVI, you’ll need at least one GPU (yes, that’s the expensive part). From there, the process is straightforward:

Once trained, these models are also exportable, so the same model can be used on multiple systems. And if you no longer need to refine your model, you can stop here: the expensive part is done.

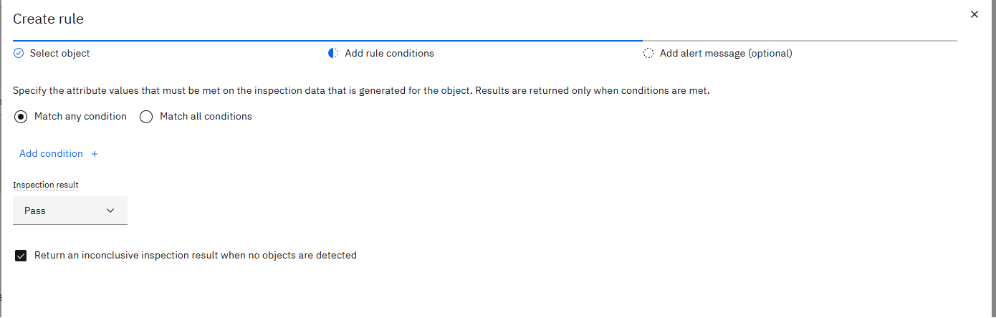

The process doesn’t stop after deployment. Here’s what you can do next:

When I attended a recent Maximo User Group, I noticed there was a bit of a misunderstanding about how MVI is used in industry. Many people seemed to think of MVI in a very specific way: a camera fixed above a conveyor belt on a production line, analyzing parts in a stable, unchanging environment. And yes, that’s a classic use case, but it only scratches the surface.

What I’ve discovered is that MVI is far more dynamic and versatile than many realize. It’s not limited to static setups. For example, a technician can use MVI for in-person inspections, simply by snapping a photo of an asset while walking around a factory. Or, you can use live feeds from security cameras along train tracks to automatically check for obstructions. At the other end of the spectrum, you can even have drones flying over a solar farm, with MVI monitoring the footage live or analyzing it later as a batch inspection.

The general gist? MVI adapts to your needs. It’s not about fitting your process to the tool; it’s about letting the tool fit your use case, whether that’s fixed, mobile, live, or after the fact.

Edge deployment means running MVI’s AI models on devices or servers closer to the assets. A simple example is a camera linked directly to a local edge server. The camera streams data to this server with minimal latency, and the model analyzes the stream continuously, ready to trigger actions based on what it finds.

Using an Edge server is surprisingly straightforward once you get started. The first step is connecting it to your MVI training server using an API key. That connection is what unlocks everything. From there, you can pull down the models you want to run locally on the Edge.

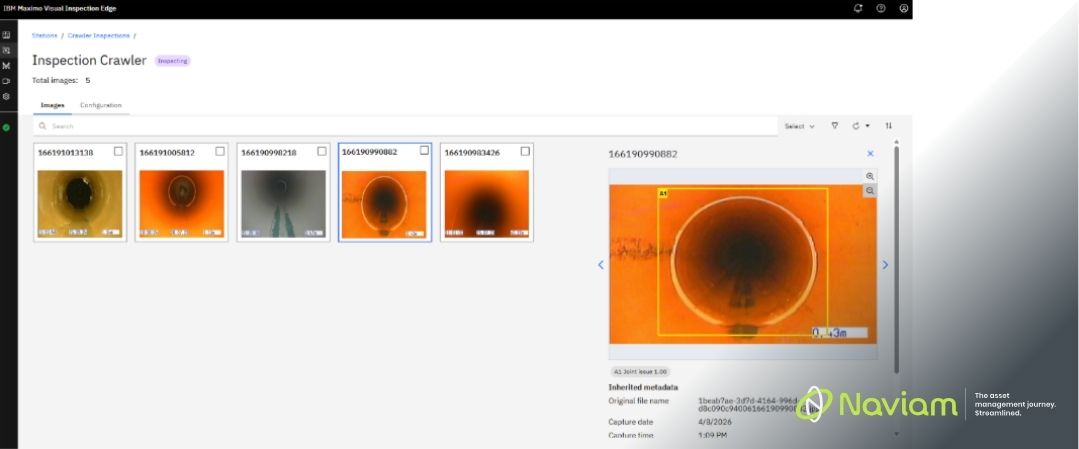

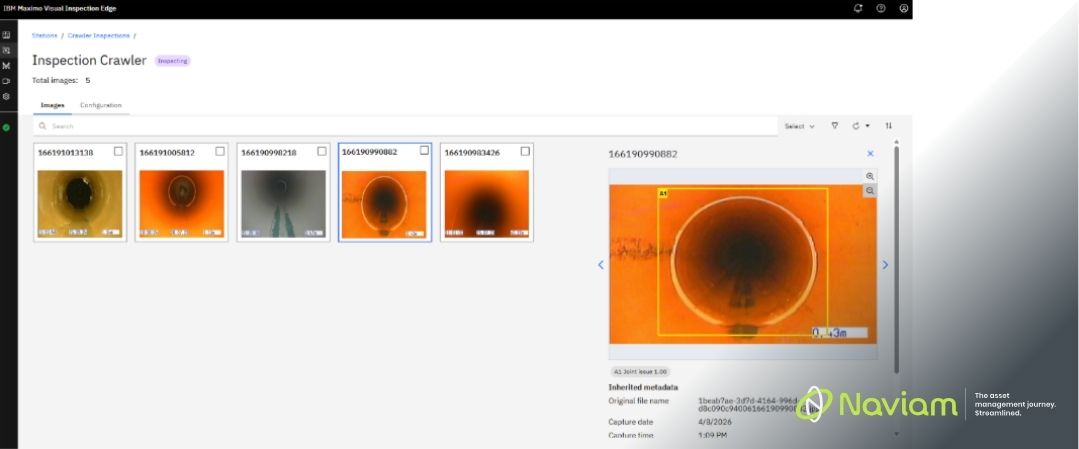

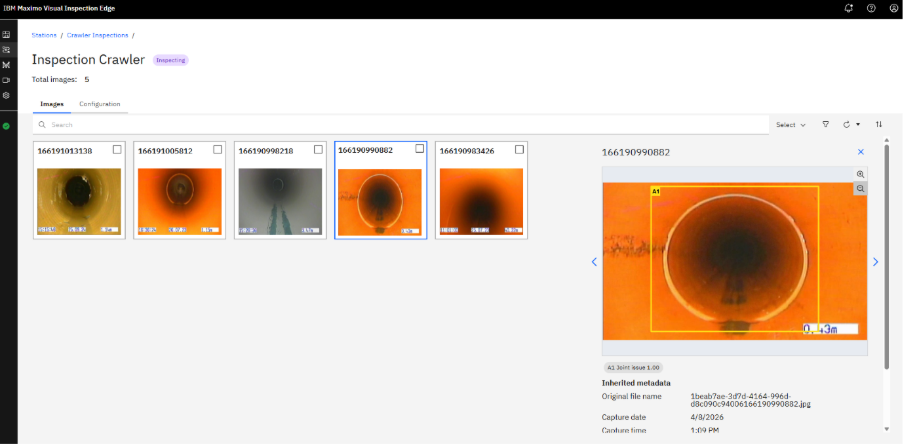

Once the models are in place, you set up your input sources. You can choose from image folders, video folders, or live camera feeds, depending on how you want to inspect your data. Whether it’s a constant live stream or a batch of images and videos dropped in as needed, the Edge server handles it cleanly and efficiently.

Next, you create a station. Think of this as a logical grouping for your inspections, keeping related results together in one place. Within a station, you then define individual inspections. Each inspection lets you decide which model to use, how it should behave, and what triggers it, whether that’s on a schedule or driven by an MQTT message.
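To make the station and inspection relationship concrete, here is a minimal Python sketch of how that grouping could be modeled. Everything in it is an assumption for illustration: the class names, field names, topic strings, and model names are hypothetical and do not reflect MVI Edge's actual configuration schema or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Inspection:
    """Hypothetical stand-in for an inspection definition (not MVI's schema)."""
    name: str
    model: str                              # which deployed model to run
    trigger: str                            # "schedule" or "mqtt"
    interval_seconds: Optional[int] = None  # used when trigger == "schedule"
    mqtt_topic: Optional[str] = None        # used when trigger == "mqtt"

    def __post_init__(self):
        # Reject definitions whose trigger is missing its required setting.
        if self.trigger == "schedule":
            if self.interval_seconds is None or self.interval_seconds <= 0:
                raise ValueError("a scheduled inspection needs a positive interval")
        elif self.trigger == "mqtt":
            if not self.mqtt_topic:
                raise ValueError("an MQTT-triggered inspection needs a topic")
        else:
            raise ValueError(f"unknown trigger: {self.trigger!r}")


@dataclass
class Station:
    """A logical grouping that keeps related inspections together."""
    name: str
    inspections: List[Inspection] = field(default_factory=list)

    def triggered_by(self, topic: str) -> List[Inspection]:
        # Return the inspections that should run when a message arrives on `topic`.
        return [
            i for i in self.inspections
            if i.trigger == "mqtt" and i.mqtt_topic == topic
        ]


# Illustrative data only; names and topics are made up.
station = Station("solar-field-a", [
    Inspection("panel-cracks", model="crack-detector",
               trigger="mqtt", mqtt_topic="drone/frames"),
    Inspection("hourly-sweep", model="debris-detector",
               trigger="schedule", interval_seconds=3600),
])
```

Calling `station.triggered_by("drone/frames")` returns only the MQTT-driven inspection, which mirrors the idea that each inspection carries its own model choice and trigger while the station simply groups them.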

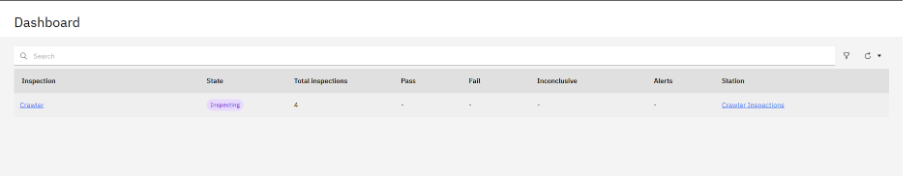

Finally, everything comes together in the dashboard. From here, you can see all your inspections at a glance and instantly understand their current state. You can track what’s been processed, review results as they come in, and quickly spot anything that needs attention.

A solar farm deploys drones equipped with edge AI to inspect panels. The drones analyze footage in real time, flagging damaged panels and sending only actionable alerts to the central Maximo system. Field technicians receive targeted work orders, minimizing manual inspection time and maximizing asset uptime.
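The "only actionable alerts" step in the solar farm scenario can be sketched as a simple filter over model output. This is a rough illustration under stated assumptions, not how MVI or Maximo implements it: the `Detection` record, the label names, and the 0.8 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass
class Detection:
    """Hypothetical per-frame result; not MVI's actual output format."""
    panel_id: str
    label: str
    confidence: float  # 0.0 to 1.0


def actionable_alerts(detections: Iterable[Detection],
                      fault_labels: Set[str],
                      min_confidence: float = 0.8) -> List[Detection]:
    """Keep one alert per panel: its highest-confidence fault at or above the threshold."""
    best = {}
    for d in detections:
        # Skip non-fault labels and low-confidence results.
        if d.label not in fault_labels or d.confidence < min_confidence:
            continue
        current = best.get(d.panel_id)
        if current is None or d.confidence > current.confidence:
            best[d.panel_id] = d
    return sorted(best.values(), key=lambda d: d.panel_id)


# Illustrative data only.
detections = [
    Detection("P-001", "crack", 0.93),
    Detection("P-001", "crack", 0.85),    # duplicate sighting of the same panel
    Detection("P-002", "clean", 0.99),    # not a fault
    Detection("P-003", "hotspot", 0.55),  # below the threshold
    Detection("P-004", "hotspot", 0.81),
]
alerts = actionable_alerts(detections, fault_labels={"crack", "hotspot"})
```

Here `alerts` contains one entry each for `P-001` and `P-004`. Filtering and deduplicating on the edge device is what keeps the traffic to the central system small: only these two records would need to become work orders.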

One of the great things about edge deployment is flexibility: the servers or devices running the models can vary depending on your use case and the model’s requirements. I recently attended the UK&I MUG with Naviam, where we saw a demo in which IBM had an edge server running on a piece of hardware that was nearly 15 years old, with no GPU and only 16 GB of RAM.

The usual rule still applies: you get what you pay for. A newer server will give you better speed and reliability, but even modest hardware can handle certain workloads without breaking the bank.

MVI’s edge capabilities represent a leap forward in asset management, delivering real-time, actionable insights where they matter most. They also allow teams to train and deploy models with little to no prior experience with vision models. In the next post, I will go deeper into Naviam’s solution, which bridges the gap by integrating MVI inspections (both on the edge and in the cloud) directly into MAS Manage.
